Building a Self-Healing AI Data Quality Platform: Case Study

Enterprise systems are only as reliable as the data flowing through them. CRMs, ERPs, APIs, ingestion pipelines, and internal applications continuously generate and modify records, but without structured data quality management, inconsistencies compound rapidly.

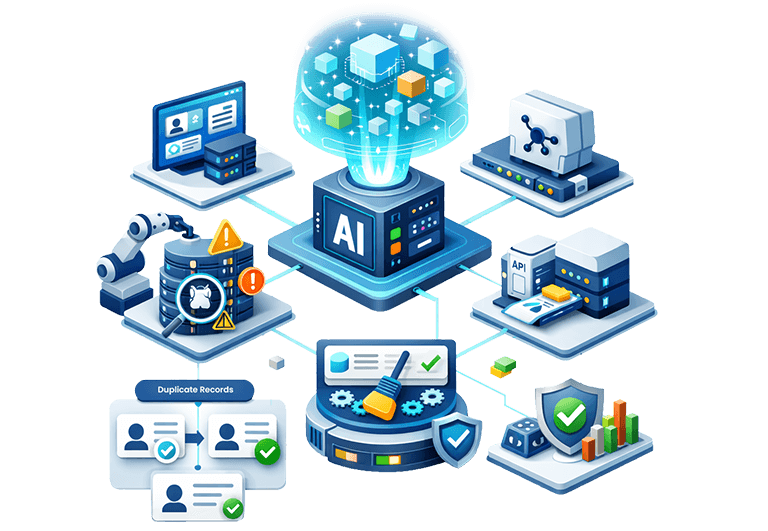

Our client struggled with systemic data degradation across millions of records. Infomaze engineered a self-healing ecosystem powered by AI data cleansing, advanced validation logic, and automated data deduplication services embedded directly into operational pipelines.

This case study shows how we transformed fragmented datasets into continuously validated, high-quality data using scalable data cleaning and intelligent duplicate removal.

Client Overview

The client operates a distributed enterprise application ecosystem consisting of multiple transactional databases (SQL and NoSQL), CRM and internal operational systems, third-party API integrations, and both batch and real-time ingestion pipelines.

With millions of entity records, continuous daily ingestion, and multiple write sources, the absence of a centralized validation layer created growing reliability concerns. The primary objective was to establish an automated, centralized data quality framework without disrupting existing production systems while enabling scalable data quality management across platforms.

Enterprise Data Quality & Deduplication Challenges

Modern distributed systems create complexity. Below are the key challenges our client faced:

Uncontrolled Data Ingestion

Data entering the system via APIs, imports, and manual forms often bypassed validation logic, leading to inconsistent records at the source and weakening overall CRM data integrity.

Schema Drift Between Systems

Identical attributes were stored in inconsistent formats, such as phone numbers as integers or strings, country names versus ISO codes, and mixed casing. This caused downstream transformation failures.

Entity Duplication Across Platforms

Exact matching failed due to spelling variations, partial addresses, and alternate company names, resulting in fragmented customer identity and ineffective duplicate record removal.

High Volume of Invalid Communication Data

Large volumes of syntactically incorrect emails, disposable domains, and inactive contacts reduced campaign effectiveness and complicated deduplication.

Missing Critical Attributes

Null or inconsistent required fields caused analytics pipeline breakdowns, reduced reporting accuracy, and increased manual correction overhead.

Absence of Continuous Quality Monitoring

Data quality checks occurred only during reporting cycles, not during ingestion or updates, which prevented proactive data quality management.

AI-Powered Data Cleaning & Deduplication Solutions

To address these enterprise-scale issues, Infomaze designed a layered architecture combining automation, ML intelligence, and scalable data standardization solutions. Instead of reactive cleanup, we embedded proactive AI data cleansing directly into the data lifecycle.

We inserted a preprocessing service within ingestion pipelines, deployed as a containerized microservice that intercepts payloads before persistence.

Instead of modifying core transactional systems, we implemented:

- API gateway interceptors

- Middleware transformation layers

- Batch preprocessing hooks

Engineering Implementation

NLP-Powered Correction Models

We trained contextual similarity models using token embeddings to detect spelling inconsistencies beyond simple dictionary matching. This allowed correction of near-miss spellings in names, addresses, and company fields.

Hybrid Regex + ML Validators

Deterministic regex handled structural checks (phone format, email pattern), while ML models handled contextual anomalies.
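The split between deterministic and contextual checks can be sketched as follows. This is a minimal illustration, not the production validator: the patterns, the `validate` function, and the `anomaly_score` callable (a stand-in for an ML model returning a value between 0 and 1) are all assumptions.

```python
import re

# Hypothetical structural patterns; real deployments would tune these.
EMAIL_RE = re.compile(r"^[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}$")
PHONE_RE = re.compile(r"^\+?[0-9][0-9\s().-]{6,18}[0-9]$")


def structural_errors(record: dict) -> list:
    """Return the names of fields that fail the deterministic regex checks."""
    errors = []
    if not EMAIL_RE.match(record.get("email") or ""):
        errors.append("email")
    if not PHONE_RE.match(record.get("phone") or ""):
        errors.append("phone")
    return errors


def validate(record: dict, anomaly_score, threshold: float = 0.8) -> dict:
    """Run regex checks first, then a pluggable contextual scorer."""
    errors = structural_errors(record)
    # Structurally broken records are treated as anomalous without
    # calling the (assumed) model at all.
    score = 1.0 if errors else anomaly_score(record)
    return {"errors": errors, "anomalous": score >= threshold}
```

Running the cheap regex layer first means the model is only consulted for records that are already structurally sound.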

Case Normalization Enforcement

A rule engine enforced PascalCase / Title Case during ingestion, preventing casing drift at the database level.

This strengthened upstream data cleaning services while reducing downstream reconciliation overhead.

We built a standalone email validation microservice accessible via:

- REST APIs (real-time ingestion validation)

- Message queue consumers (batch processing)

This decoupled email verification from core systems.

Implementation

RFC-Compliant Parsing Engine

Built using standardized email parsing libraries with fallback validation logic.

MX Record Verification Layer

Integrated DNS query resolution modules to validate domain-level authenticity during ingestion.

Disposable Domain Intelligence

Maintained continuously updated domain blacklists stored in distributed cache layers.

Deliverability Scoring Model

Implemented probabilistic scoring combining syntax, domain validity, and historical bounce signals.
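One way to combine these signals is a logistic function over weighted inputs. The sketch below is illustrative only: the weights, the bias, and the `deliverability_score` name are assumptions, and a production model would learn its parameters from historical bounce data.

```python
import math

# Hypothetical weights; not taken from the deployed model.
WEIGHTS = {"syntax_ok": 2.0, "mx_ok": 2.5, "disposable": -3.0, "bounce_rate": -4.0}
BIAS = -1.5


def deliverability_score(syntax_ok: bool, mx_ok: bool,
                         disposable: bool, bounce_rate: float) -> float:
    """Combine the signals through a logistic function into a score in (0, 1)."""
    z = (BIAS
         + WEIGHTS["syntax_ok"] * syntax_ok
         + WEIGHTS["mx_ok"] * mx_ok
         + WEIGHTS["disposable"] * disposable
         + WEIGHTS["bounce_rate"] * bounce_rate)
    return 1.0 / (1.0 + math.exp(-z))
```

A well-formed address on a domain with valid MX records and no bounce history scores close to 1, while a disposable domain with frequent bounces scores close to 0.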

This significantly elevated enterprise CRM data cleaning quality and reduced campaign inefficiencies.

We replaced deterministic matching with a probabilistic entity resolution engine deployed as:

- Batch clustering jobs (large-scale reconciliation)

- Real-time similarity scoring APIs (incremental updates)

All duplicate logic ran outside transactional databases to avoid performance degradation.

Implementation

Weighted Multi-Field Similarity Engine

Each attribute (name, email, phone, address) received configurable weights stored in metadata configuration tables.
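A weighted multi-field score might look like the sketch below. The weights are hypothetical (the case study keeps them in metadata tables), and `difflib.SequenceMatcher` from the Python standard library stands in for whichever per-field matcher is actually configured.

```python
from difflib import SequenceMatcher

# Hypothetical weights for illustration only.
FIELD_WEIGHTS = {"name": 0.4, "email": 0.3, "phone": 0.2, "address": 0.1}


def field_similarity(a: str, b: str) -> float:
    """Similarity in [0, 1] from difflib, standing in for the real matchers."""
    return SequenceMatcher(None, a.lower().strip(), b.lower().strip()).ratio()


def record_similarity(r1: dict, r2: dict, weights: dict = FIELD_WEIGHTS) -> float:
    """Weighted average over the fields that are populated in both records."""
    total, weight_sum = 0.0, 0.0
    for field, weight in weights.items():
        a, b = r1.get(field), r2.get(field)
        if a and b:  # skip missing values instead of penalising them
            total += weight * field_similarity(a, b)
            weight_sum += weight
    return total / weight_sum if weight_sum else 0.0
```

Renormalising by the weights of the fields actually compared is a design choice made here so that sparse records are not scored as dissimilar merely because attributes are missing.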

Fuzzy Matching Algorithms

We integrated Levenshtein distance, Jaro-Winkler similarity, and phonetic encoding (Soundex / Metaphone).
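For reference, two of these techniques are small enough to show in full. The functions below are textbook implementations of Levenshtein distance and American Soundex written for illustration; they are not the project's code, and Jaro-Winkler and Metaphone are omitted for brevity.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character edits turning a into b."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution
        prev = curr
    return prev[-1]


_GROUPS = {"1": "bfpv", "2": "cgjkqsxz", "3": "dt", "4": "l", "5": "mn", "6": "r"}
_CODES = {ch: digit for digit, letters in _GROUPS.items() for ch in letters}


def soundex(name: str) -> str:
    """American Soundex: first letter plus three digits, zero-padded."""
    letters = [c for c in name.lower() if c.isalpha()]
    if not letters:
        return ""
    result = letters[0].upper()
    last_code = _CODES.get(letters[0], "")
    for c in letters[1:]:
        if c in "hw":
            continue  # h and w do not separate letters with the same code
        code = _CODES.get(c, "")
        if code and code != last_code:
            result += code
        last_code = code  # vowels reset the code, so repeats are allowed
    return (result + "000")[:4]
```

Edit distance catches typos such as transposed or missing letters, while phonetic codes catch names that sound alike but are spelled differently; "Robert" and "Rupert" both encode to `R163`.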

Clustering Models

Used graph-based clustering to group similar records into identity clusters.
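The simplest form of graph-based clustering treats each record as a node, each above-threshold match as an edge, and each connected component as one identity cluster. The union-find sketch below illustrates that idea; the `cluster_records` name and its inputs are assumptions, and the deployed system may use a more selective clustering method.

```python
def cluster_records(record_ids, matched_pairs):
    """Group records into identity clusters via connected components."""
    parent = {rid: rid for rid in record_ids}

    def find(x):
        # Walk to the root, shortening the path along the way.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for a, b in matched_pairs:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a  # merge the two components

    clusters = {}
    for rid in record_ids:
        clusters.setdefault(find(rid), []).append(rid)
    return sorted(sorted(members) for members in clusters.values())
```

Connected components are transitive: if A matches B and B matches C, all three land in one cluster even when A and C were never compared directly, which is why match thresholds need to be conservative.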

Golden Record Selection Framework

Confidence hierarchy logic prioritized:

- Most complete record

- Most recently updated

- Most trusted source
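A survivorship rule of this kind can be expressed as a sort key. In the sketch below the three criteria are applied in the order listed above, which is an assumption about tie-breaking; the `SOURCE_TRUST` ranking and the field names `source` and `updated_at` are also hypothetical.

```python
from datetime import date

# Hypothetical trust ranking per source; higher means more trusted.
SOURCE_TRUST = {"crm": 3, "erp": 2, "web_form": 1}
_META_KEYS = {"source", "updated_at"}


def completeness(record: dict) -> int:
    """Count populated fields, ignoring bookkeeping keys."""
    return sum(1 for key, value in record.items()
               if key not in _META_KEYS and value not in (None, ""))


def select_golden(cluster: list) -> dict:
    """Pick the survivor: completeness, then recency, then source trust."""
    return max(
        cluster,
        key=lambda r: (completeness(r), r["updated_at"],
                       SOURCE_TRUST.get(r["source"], 0)),
    )


# Usage: the CRM record wins because it has the most populated fields.
example = [
    {"name": "Acme", "email": "", "source": "web_form", "updated_at": date(2024, 5, 1)},
    {"name": "Acme Corp", "email": "info@acme.test", "source": "crm",
     "updated_at": date(2024, 1, 1)},
]
golden = select_golden(example)
```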

This deduplication architecture enabled scalable duplicate record removal across millions of records.

We designed a canonical schema registry acting as a transformation contract between systems.

Instead of altering legacy schemas, we built transformation adapters, mapping configuration layers, and ETL transformation middleware.

Engineering Implementation

Address Parsing Pipelines

Integrated third-party parsing libraries with fallback heuristic engines for incomplete addresses.

Phone Formatting Engine

Converted all numbers into E.164 format during ingestion, preventing downstream communication errors.
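A simplified E.164 conversion is sketched below. It is a best-effort illustration with stated assumptions: a default country code is supplied by the caller, a leading `00` is treated as an international dialling prefix, and the eight-digit lower bound is only a sanity check. A production engine would more likely rely on a dedicated library such as the `phonenumbers` package, which knows per-country numbering plans.

```python
import re
from typing import Optional


def to_e164(raw: str, default_country_code: str = "1") -> Optional[str]:
    """Best-effort E.164 conversion; returns None when the input is unusable."""
    text = raw.strip()
    digits = re.sub(r"\D", "", text)
    if text.startswith("+"):
        pass  # the number already carries a country code
    elif text.startswith("00"):
        digits = digits[2:]  # drop the international dialling prefix
    else:
        # National format: drop any trunk prefix, prepend the assumed country code.
        digits = default_country_code + digits.lstrip("0")
    # E.164 allows at most 15 digits and country codes never start with 0.
    if not digits or digits[0] == "0" or not 8 <= len(digits) <= 15:
        return None
    return "+" + digits
```

Returning `None` rather than a guessed value lets the pipeline route unusable numbers to review instead of storing a malformed contact.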

Company Name Canonicalization

Applied suffix stripping logic (Pvt Ltd, LLC, Inc) with normalization dictionaries.
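Suffix stripping of this kind can be sketched with a small regular expression. The suffix list below extends the three examples from the text with a few common variants and is an assumption, as is the `canonical_company` name; a real normalization dictionary would be larger and locale-aware.

```python
import re

# Hypothetical suffix list; the text mentions Pvt Ltd, LLC, and Inc.
_SUFFIXES = ("pvt ltd", "private limited", "ltd", "llc", "inc", "corp")
_SUFFIX_RE = re.compile(
    r"[\s,]+(?:" + "|".join(re.escape(s) for s in _SUFFIXES) + r")$"
)


def canonical_company(name: str) -> str:
    """Lowercase, drop dots, collapse whitespace, and strip legal suffixes."""
    text = name.lower().replace(".", "")       # "Inc." -> "inc"
    text = re.sub(r"\s+", " ", text).strip(" ,")
    # Strip repeatedly so stacked suffixes are all removed.
    while True:
        stripped = _SUFFIX_RE.sub("", text)
        if stripped == text:
            return text
        text = stripped
```

Because the pattern requires a separator before the suffix, a company whose whole name is "Inc" is left intact rather than reduced to an empty string.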

Rule-Based Transformation Engine

Developed a rule engine allowing business teams to modify transformations without code deployment.

These scalable data standardization solutions significantly improved integration readiness.

We implemented a predictive inference engine running as scheduled batch jobs and real-time triggers. Models were deployed via containerized inference services.

Engineering Implementation

Null Pattern Analysis

Statistical profiling identified systematic gaps by source system.
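A basic version of this profiling computes, for each source system, the share of records in which a field is empty. The sketch below assumes each record carries a `source` key; that key and the function name are illustrative.

```python
from collections import defaultdict


def null_ratios_by_source(records, fields):
    """Return {source: {field: fraction of records where the field is empty}}."""
    missing = defaultdict(lambda: defaultdict(int))
    totals = defaultdict(int)
    for rec in records:
        source = rec.get("source", "unknown")
        totals[source] += 1
        for field in fields:
            if rec.get(field) in (None, ""):
                missing[source][field] += 1
    return {
        source: {field: missing[source][field] / totals[source] for field in fields}
        for source in totals
    }
```

A ratio near 1.0 for a single source points to a systematic gap, such as an integration that never sends the field, rather than random omissions.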

Feature Correlation Modeling

Used supervised learning models trained on high-confidence datasets to infer missing attributes.

Predictive Completion Engine

Generated attribute suggestions but only applied updates above confidence thresholds.

Confidence-Governed Writeback

We implemented a safeguard mechanism: predicted values never overwrite verified fields.
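The two rules described above, a confidence threshold and protection of verified fields, can be sketched as a single guard function. The threshold value, the shape of `predictions`, and the extra rule that only empty fields are filled are assumptions made for illustration.

```python
def apply_predictions(record, predictions, verified_fields, threshold=0.9):
    """Return a copy of record with high-confidence predictions filled in.

    predictions maps field name -> (predicted value, confidence in [0, 1]).
    """
    updated = dict(record)  # never mutate the caller's record
    for field, (value, confidence) in predictions.items():
        if field in verified_fields:
            continue  # predicted values never overwrite verified fields
        if updated.get(field) not in (None, ""):
            continue  # assumption: only fill gaps, leave existing values alone
        if confidence >= threshold:
            updated[field] = value
    return updated
```

Predictions below the threshold are simply not written; a fuller implementation could queue them as suggestions for human review.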

This strengthened enterprise-scale AI data cleansing while maintaining governance compliance.

Development Architecture

The validation layer was built as a rules engine configurable via metadata tables rather than hard-coded logic.

Rules were evaluated:

- During ingestion

- During updates

- During batch reconciliation

Implementation

Relational Mapping Tables

Maintained authoritative city–state–country datasets for validation cross-checks.

Postal Code Validation APIs

Integrated geographic validation libraries.

Attribute Dependency Engine

Validated field combinations (e.g., country must match phone code prefix).
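The country and phone prefix example can be sketched as a lookup plus a prefix check. The mapping below is a tiny illustrative subset, and the function names and the three-way outcome are assumptions. Note that several countries share a calling code (for example `+1`), so a prefix match is a necessary condition, not proof.

```python
from typing import Optional

# Small illustrative mapping; a real table would cover every country.
CALLING_CODES = {"US": "+1", "GB": "+44", "IN": "+91", "DE": "+49"}


def phone_matches_country(country_iso: str, phone_e164: str) -> Optional[bool]:
    """True or False when the country is known, None when it cannot be checked."""
    prefix = CALLING_CODES.get(country_iso.upper())
    if prefix is None:
        return None
    return phone_e164.startswith(prefix)


def check_record(record: dict) -> str:
    """Route a record based on the dependency check."""
    result = phone_matches_country(record["country"], record["phone"])
    if result is False:
        return "quarantine"  # flag the conflict instead of failing silently
    return "accept" if result else "unverified"
```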

Conflict Detection Workflow

Flagged suspicious records for quarantine instead of silent failure.

This strengthened systemic data quality management beyond surface-level validation.

We implemented an identity graph architecture using:

- Persistent global entity IDs

- Relationship storage

- Incremental reconciliation jobs

Implementation

Cross-Database Entity Linking

Built connectors for SQL and NoSQL sources.

Identity Graph Construction

Stored relationships in graph data structures for fast similarity traversal.

Incremental Reconciliation

Instead of full dataset reprocessing, delta-based reconciliation reduced compute load.

This provided durable support for enterprise data deduplication services across distributed platforms.

We designed a record scoring pipeline executed before records entered analytics systems.

Implementation

Activity-Based Scoring

Evaluated recency, update frequency, and engagement metrics.

Fake Pattern Detection Models

Trained anomaly detection models to identify bot-like or synthetic patterns.

Lifecycle Governance Policies

Defined automated archival workflows for obsolete records.

This enhanced preventive data cleaning services and protected analytical systems.

We implemented an observability-first architecture with:

- Telemetry logging

- Validation metrics exposure

- Drift detection algorithms

Engineering Implementation

Real-Time Validation Hooks

Embedded validation triggers in ingestion middleware.

Quality Dashboards

Exposed metrics such as duplicate density, null ratios, and schema violations.

Automated Remediation Triggers

Triggered cleanup workflows when quality metrics breached SLA thresholds.
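A threshold-driven trigger can be sketched as a comparison of current metrics against configured limits, followed by a dispatch to registered handlers. The metric names echo the dashboard metrics above, but the limits, the handler registry, and the function names are hypothetical.

```python
# Hypothetical SLA limits, expressed as maximum tolerated ratios.
THRESHOLDS = {
    "duplicate_density": 0.02,
    "null_ratio": 0.05,
    "schema_violation_rate": 0.01,
}


def breached_metrics(metrics: dict, thresholds: dict = THRESHOLDS) -> list:
    """Names of metrics whose current value exceeds its limit."""
    return sorted(name for name, limit in thresholds.items()
                  if metrics.get(name, 0.0) > limit)


def run_remediation(metrics: dict, handlers: dict) -> list:
    """Call the registered cleanup handler for every breached metric."""
    triggered = []
    for name in breached_metrics(metrics):
        handler = handlers.get(name)
        if handler is not None:
            handler(metrics[name])
            triggered.append(name)
    return triggered
```

Breaches without a registered handler are skipped here; in practice they would more likely raise an alert so that no breach goes unnoticed.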

This converted data quality management from periodic audit to continuous governance.

We developed a scoring engine integrated with downstream systems via APIs.

Engineering Implementation

Weighted Validation Indexing

Each validation layer contributed to the composite reliability score.

Source Reliability Index

Maintained trust scores for each ingestion channel.

Decision-Readiness Classification

Tagged records as automation-ready, review-required, or archive-eligible. This ensured that AI systems and automation tools consumed only high-confidence data.
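The composite score and the three readiness tags can be sketched as a weighted sum followed by two cut-offs. The layer names, weights, and cut-off values below are assumptions chosen for illustration; only the three tag names come from the text.

```python
# Hypothetical per-layer weights that sum to 1.
LAYER_WEIGHTS = {
    "format": 0.25,
    "email": 0.25,
    "dedup": 0.20,
    "completeness": 0.20,
    "source": 0.10,
}


def reliability_score(layer_scores: dict) -> float:
    """Weighted sum of per-layer scores in [0, 1]; missing layers count as 0."""
    return sum(weight * layer_scores.get(layer, 0.0)
               for layer, weight in LAYER_WEIGHTS.items())


def classify(score: float, auto: float = 0.85, review: float = 0.5) -> str:
    """Map a composite score to a decision-readiness tag."""
    if score >= auto:
        return "automation-ready"
    if score >= review:
        return "review-required"
    return "archive-eligible"
```

Treating a missing layer score as zero is a conservative choice: a record that skipped a validation layer cannot reach the automation-ready tier by omission.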

Why This Matters for Enterprise-Grade Decision Makers

Modern enterprises cannot scale automation, analytics, or AI without robust data quality management foundations.

For CTOs: Scalability & Technical Efficiency

Reusable Validation Infrastructure:

Replaced fragile scripts with modular validation architecture supporting long-term scalability.

AI-Ready Data Pipelines:

Enabled structured AI data cleansing workflows to prepare high-confidence datasets.

Reduced Engineering Overhead:

Minimized recurring cleanup efforts through automated data cleaning services.

Cross-System Consistency:

Strengthened interoperability between CRM, ERP, APIs, and internal platforms.

For CEOs: Business ROI & Operational Advantage

Reliable Forecasting & Reporting:

Improved executive dashboard accuracy through continuous enterprise-wide data quality management.

Reduced Data Fragmentation:

Strengthened customer identity integrity using intelligent data deduplication services across platforms.

Lower Operational Costs:

Eliminated manual audits and correction cycles through automated validation.

Faster Automation Deployment:

Enabled confident scaling of analytics and AI initiatives through trusted, standardized datasets.

Results & Business Impact

99% Reliability

Major improvement in automation system reliability.

Duplicate Reduction

Significant reduction in duplicate entity creation.

Higher Completeness

Improved completeness across critical data attributes.

Lower Validation Effort

Minimized manual validation and data review workload.

Better Analytics Accuracy

Improved downstream analytics and reporting accuracy.

Conclusion

Instead of relying on periodic cleansing initiatives, Infomaze engineered a continuous, self-healing data ecosystem embedded directly into enterprise pipelines.

Through intelligent data cleaning services, scalable data deduplication services, advanced AI data cleansing, and automated data standardization solutions, the platform ensures that records remain validated, unified, and trustworthy as they move across systems.

The result is not just cleaner data but a resilient foundation for scalable automation, analytics, and AI adoption across the enterprise.
